Sound is a wave that carries energy, so it is characterized by frequency and amplitude: on a waveform plot, frequency shows up along the time axis and amplitude along the level axis. Within the audible range, voice signals occupy roughly 80 Hz to 3400 Hz, while music signals span 20 Hz to 20 kHz. Voice and music are the main objects processed by multimedia technology. Sound waves between 20 Hz and 20 kHz, which the human ear can hear, are called audible sound; those below 20 Hz are called infrasound and those above 20 kHz are called ultrasound. Multimedia technology deals only with the audible part.

One: The three main steps of digitizing audio

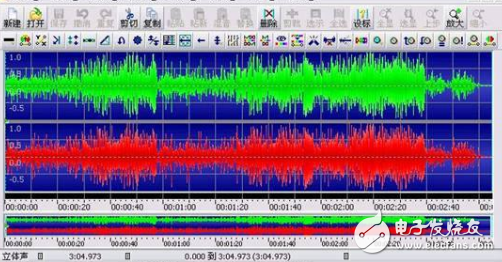

Because analog sound is continuous in time, the sound signal picked up by a microphone must be digitized before a computer can process it. The usual method is PCM (pulse code modulation), which converts a continuously varying analog signal into digital codes in three steps: sampling, quantization and coding.
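The three steps can be sketched end to end in a few lines of Python. This is only an illustration under stated assumptions: the function name `pcm_encode`, the 440 Hz test tone, the 8 kHz sampling rate and the 16-bit little-endian output format are all choices made for this example, not something the article prescribes.

```python
import math
import struct


def pcm_encode(freq_hz: float, duration_s: float,
               sample_rate_hz: int = 8000, amplitude: float = 0.5) -> bytes:
    """Turn a synthetic sine tone into 16-bit PCM bytes (hypothetical helper)."""
    n = round(duration_s * sample_rate_hz)

    # Step 1, sampling: read the "analog" amplitude once per sampling interval.
    samples = [amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate_hz)
               for i in range(n)]

    # Step 2, quantization: map each amplitude in [-1, 1] to a 16-bit integer.
    codes = [max(-32768, min(32767, round(s * 32767))) for s in samples]

    # Step 3, coding: serialize the integers as little-endian signed 16-bit words.
    return struct.pack(f"<{n}h", *codes)


data = pcm_encode(440.0, 0.01)
print(len(data))  # 80 samples * 2 bytes = 160
```

A real capture path would read the samples from an analog-to-digital converter instead of computing a sine, but the quantization and coding steps would look much the same.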

1. Sampling

Sampling reads the amplitude of the sound once per fixed time interval. The number of samples taken per unit time is called the sampling frequency. The higher the sampling frequency, the more closely the discrete amplitude points follow the continuous analog audio curve, and the larger the amount of sampled data.

To ensure that the digitized audio can be restored accurately (reversibly) to analog audio for output, the sampling theorem requires the sampling frequency to be at least twice the highest frequency in the spectrum of the analog signal (strictly speaking, greater than twice).

Commonly used audio sampling rates are 8 kHz, 11.025 kHz, 16 kHz, 22.05 kHz, 37.8 kHz, 44.1 kHz and 48 kHz.

For example, a voice signal lies in the range 0.3 to 3.4 kHz, so sampling it at fs = 8 kHz yields a sampled signal that can stand in for the original continuous voice signal. CD audio is sampled at 44.1 kHz.
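The voice example can be checked numerically. The sketch below assumes pure tones and ideal sampling; `min_sampling_rate` and `alias_frequency` are hypothetical helper names introduced for illustration. It shows that a 3.4 kHz component survives 8 kHz sampling, while a 5 kHz component would fold back to a lower frequency.

```python
def min_sampling_rate(highest_hz: float) -> float:
    """Lower bound on the sampling rate for a signal band-limited to highest_hz."""
    return 2.0 * highest_hz


def alias_frequency(tone_hz: float, fs_hz: float) -> float:
    """Apparent frequency of a pure tone after ideal sampling at fs_hz."""
    folded = tone_hz % fs_hz
    return min(folded, fs_hz - folded)


print(min_sampling_rate(3400.0))        # 6800.0, so 8 kHz is sufficient for voice
print(alias_frequency(3400.0, 8000.0))  # 3400.0, the tone is preserved
print(alias_frequency(5000.0, 8000.0))  # 3000.0, the tone aliases
```

This is why practical converters place a low-pass filter in front of the sampler: anything above half the sampling rate would otherwise be indistinguishable from a lower frequency.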

2. Quantization

Quantization is the conversion of the amplitude of the sampled sound signal into a digital value used to represent the signal strength.

Quantization accuracy is the number of binary bits used to represent each sample value, also known as the number of quantization bits. Sound signals are typically quantized with 4, 6, 8, 12 or 16 bits.
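To see what the number of quantization bits buys, here is a minimal uniform quantizer. It assumes samples normalized to the range [-1.0, 1.0]; the function names are hypothetical, and the signal-to-noise figure is the usual rule of thumb for a full-scale sine wave rather than a measured value.

```python
def quantize(sample: float, bits: int) -> int:
    """Map a sample in [-1.0, 1.0] to a signed integer code of the given width."""
    levels = 2 ** (bits - 1)                    # e.g. 32768 for 16 bits
    code = round(sample * levels)
    return max(-levels, min(levels - 1, code))  # clip to the representable range


def dequantize(code: int, bits: int) -> float:
    """Map an integer code back to the [-1.0, 1.0) amplitude scale."""
    return code / 2 ** (bits - 1)


def theoretical_snr_db(bits: int) -> float:
    """Rule-of-thumb SNR of an ideal quantizer for a full-scale sine wave."""
    return 6.02 * bits + 1.76


x = 0.3
for bits in (4, 8, 16):
    error = abs(dequantize(quantize(x, bits), bits) - x)
    print(bits, quantize(x, bits), error, theoretical_snr_db(bits))
```

Each extra bit halves the step size, which is where the roughly 6 dB per bit comes from.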

The finite sampling frequency and quantization accuracy mean that digital audio can only approximate a natural signal, however closely. In computer applications PCM offers the highest fidelity, so by convention it is treated as lossless coding.

3. Coding

With a sampling rate of 44.1 kHz, a quantization accuracy of 16 bits and two channels, PCM output has a data rate of 44.1 k × 16 × 2 = 1411.2 kbps. One second of audio therefore takes 176.4 KB of storage and one minute about 10.58 MB, so to reduce the cost of transmission or storage the digital audio signal has to be compressed.
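The arithmetic for uncompressed PCM is easy to reproduce. The sketch below uses decimal units (1 KB = 1000 bytes, 1 MB = 1,000,000 bytes); `pcm_bit_rate` is a hypothetical helper name.

```python
def pcm_bit_rate(sample_rate_hz: int, bits_per_sample: int, channels: int) -> int:
    """Bit rate of uncompressed PCM in bits per second."""
    return sample_rate_hz * bits_per_sample * channels


bps = pcm_bit_rate(44_100, 16, 2)
bytes_per_second = bps // 8
bytes_per_minute = bytes_per_second * 60

print(bps / 1000)                    # 1411.2 kbps
print(bytes_per_second / 1000)       # 176.4 KB per second
print(bytes_per_minute / 1_000_000)  # 10.584 MB per minute
```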

Compression has so far brought the bit rate of audio signals down to between 32 and 256 kbit/s, and voice can go as low as 8 kbit/s.

The purpose of compression coding is to reduce the amount of digital audio data as far as possible without affecting how people use it. A codec is usually measured by the following six attributes:

- bit rate;

- the bandwidth of the signal;

- subjective/objective voice quality;

- delay;

- computational complexity and memory requirements;

- sensitivity to channel errors;

For encoded audio to be widely usable, it must be encoded with a standard algorithm. Traditional conference TV equipment mainly adopts the ITU-T recommendations G.711, G.722 and G.728, along with the MPEG-4 audio standard AAC-LD.

Product documentation for audio equipment often lists a variety of supported audio formats and protocols. Let's take a closer look at the common audio protocols for Bluetooth headsets and conference TV.

What are the common audio protocols for Bluetooth headsets?

HFP: The Hands-Free Profile (HFP) lets a Bluetooth device control calls: answering, hanging up, rejecting, voice dialing and so on. Whether rejecting and voice dialing work depends on whether both the Bluetooth headset and the phone support them. Car kits are a common use case: the kit connects to the phone and is used to make and receive calls. Even more common is making and receiving calls through a wireless headset connected to the phone.

HSP: The Headset Profile (HSP) provides the basic connection between a Bluetooth headset and a mobile phone. Together with the Hands-Free Profile (HFP) it is used for hands-free calling in the car and with in-car phones.

A2DP: A2DP stands for Advanced Audio Distribution Profile, the Bluetooth profile for audio transmission. With A2DP, the chip inside the headset processes the incoming data to deliver high-definition sound. However, a headset that supports A2DP is not necessarily a Bluetooth stereo headset: stereo requires two channels, so a mono Bluetooth headset cannot deliver stereo. A2DP sound can reach a 44.1 kHz sampling rate, whereas an ordinary headset only reaches 8 kHz. If your phone supports Bluetooth, you can use an A2DP headset as long as the phone has the A2DP profile loaded. Some consumers also notice Bluetooth V1.0, V1.1, V1.2 or V2.0 in the technical parameters. These are versions of the Bluetooth technology and mainly reflect the transmission speed; whether a product supports A2DP depends on whether its manufacturer has implemented the profile.

AVRCP: The Audio/Video Remote Control Profile (AVRCP) provides a standard interface for controlling TVs, hi-fi equipment and other devices. It lets a single remote control (or other device) control all the A/V devices a user has access to, and it can be used together with A2DP or VDP. AVRCP defines how to control streaming media, including pause, stop, start of playback, volume control and other remote control operations. It defines two roles: the controller and the target device.

aptX: aptX is an audio compression technology which, like ALAC, can support lossless coding. As used over Bluetooth, however, it is still lossy for various reasons, although it runs at a relatively high bit rate.

OPP: The Object Push Profile (OPP) is the part of the Bluetooth stack used to transfer data objects between devices. It is divided into a client profile (OPPC) and a server profile (OPPS). The difference between the two is that only the client can initiate a data transfer. When an accessory communicates with a mobile phone, either side may initiate the transfer request, so the device implements both OPPC and OPPS.

PBAP: Phonebook Access Profile.

What are the common audio protocols for conference TV?

1. ITU-T G.728

This recommendation for coding telephone-quality speech was issued by ITU-T in 1992. It uses LD-CELP coding at a sampling rate of 8 kHz and transmits the speech signal at 16 kbit/s. The delay is extremely short: the algorithmic coding delay is only 0.625 ms.

2. ITU-T G.711

The standard was published in 1972 and codes speech as PCM with non-uniform quantization. Speech is sampled at 8 kHz with 8 bits per sample, giving an output data rate of 64 kbps. This narrowband codec covers audio from 300 to 3,400 Hz. The voice quality is good, but the bandwidth consumed is relatively large; it is used mainly for digital telephony over digital PBX and ISDN.
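G.711 defines two non-uniform quantization laws, A-law and µ-law. The sketch below illustrates the idea with the continuous µ-law curve (µ = 255) followed by a plain 8-bit uniform quantizer. It is an assumption-laden illustration, not a bit-exact G.711 encoder: the standard uses a piecewise-linear segment approximation and its own code layout, and all function names here are hypothetical.

```python
import math

MU = 255  # µ-law parameter


def mu_law_compress(x: float) -> float:
    """Continuous µ-law curve: maps x in [-1, 1] to [-1, 1]."""
    return math.copysign(math.log1p(MU * abs(x)) / math.log1p(MU), x)


def mu_law_expand(y: float) -> float:
    """Inverse of mu_law_compress."""
    return math.copysign(math.expm1(abs(y) * math.log1p(MU)) / MU, y)


def encode_8bit(x: float) -> int:
    """Compand, then quantize uniformly to one of 256 codes (illustrative only)."""
    return round((mu_law_compress(x) + 1.0) / 2.0 * 255)


def decode_8bit(code: int) -> float:
    """Map an 8-bit code back to an amplitude in [-1, 1]."""
    return mu_law_expand(code / 255 * 2.0 - 1.0)


# Step size near zero versus near full scale: quiet sounds get finer resolution.
print(decode_8bit(129) - decode_8bit(128))
print(decode_8bit(255) - decode_8bit(254))

# 8000 samples per second at 8 bits each gives the 64 kbps quoted above.
print(8000 * 8)  # 64000
```

Because the quantization steps are small for quiet samples and large for loud ones, 8 bits per sample give speech quality that uniform quantization would need more bits to match.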

3. ITU-T G.722

ITU-T G.722 is the first standardized wideband speech coding algorithm for a 16 kHz sampling rate. It was approved by the CCITT in 1988 and is still in use today. The G.722 codec takes 16-bit data sampled at 16 kHz (bandwidth from 50 Hz to 7 kHz) and compresses it to 64, 56 or 48 kbit/s with a total delay of approximately 3 ms, providing better call quality.

G.722 has very low delay and a very low transmission bit error rate, involves no patented technology and is inexpensive. It is therefore widely used in wireless communication systems, VoIP products, personal communication services and video conferencing applications.

4. G.722.1

G.722.1 is based on Polycom's third-generation Siren 7 compression technology and was approved by ITU-T in 1999. It uses a 16 kHz sampling frequency with 16-bit quantization, covers audio from 50 Hz to 7 kHz and compresses it to 32 or 24 kbit/s. With 20 ms frames, its algorithmic delay is 40 ms.

G.722.1 achieves a lower bit rate and greater compression than G.722; the goal is roughly the same quality as G.722 at about half the bit rate. Use of this codec requires a license from Polycom.

5. G.722.1 Annex C

G.722.1 Annex C is based on Polycom's Siren 14 compression technology. It uses a 32 kHz sampling frequency, covers audio from 50 Hz to 14 kHz and compresses it to 24, 32 or 48 kbps. With 20 ms frames, its algorithmic delay is 40 ms.

In mid-2005, the International Telecommunication Union (ITU) approved Polycom's Siren 14 technology as the new standard for 14 kHz ultra-wideband audio coding, incorporating it into the ITU-T recommendations as G.722.1 Annex C. G.722.1 Annex C needs little computing power and little bandwidth, and is suitable for voice, music and natural sounds.
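The frame figures quoted for G.722.1 and Annex C translate directly into samples and payload bytes per frame. The sketch below assumes the 20 ms frame and the sampling and bit rates given above; `frame_stats` is a hypothetical helper, and packet headers are ignored.

```python
def frame_stats(sample_rate_hz: int, bit_rate_bps: int, frame_ms: int = 20):
    """Return (input samples, coded bits, coded bytes) for one frame."""
    samples_per_frame = sample_rate_hz * frame_ms // 1000
    bits_per_frame = bit_rate_bps * frame_ms // 1000
    return samples_per_frame, bits_per_frame, bits_per_frame // 8


codecs = [
    ("G.722.1", 16_000, (24_000, 32_000)),
    ("G.722.1 Annex C", 32_000, (24_000, 32_000, 48_000)),
]
for name, fs, rates in codecs:
    for rate in rates:
        samples, bits, nbytes = frame_stats(fs, rate)
        print(f"{name} @ {rate // 1000} kbps: {samples} samples -> {nbytes} bytes")
```

At 24 kbps, for instance, each 20 ms frame of 320 (or 640) input samples is reduced to 60 bytes of coded data.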

6. AAC-LD

AAC (Advanced Audio Coding) is an audio compression format developed jointly by the Fraunhofer Institute (creator of the MP3 format), Dolby Laboratories and AT&T (American Telephone and Telegraph). It is part of the MPEG-2 specification and became an international standard in March 1997. When the MPEG-4 standard took shape in 2000, MPEG-2 AAC was adopted as its core coding technology and extended with new coding features; the result is known as MPEG-4 AAC.

The MPEG-4 AAC family currently comprises nine coding specifications, of which AAC-LD (Low Delay) is intended for coding at low bit rates. It supports sampling rates from 8 kHz to 48 kHz, can deliver audio close to CD quality at 64 kbps and supports multiple channels. The algorithmic delay of AAC-LD is only 20 ms.

AAC owes much of its power to its modular design. New components can be slotted into its framework, so coding tools developed independently can be combined and build on one another's strengths.

7. Comparison of main parameters of various audio protocols:

| Codec | Sampling frequency | Supported audio bandwidth | Output bit rate | Minimum algorithmic delay |
|---|---|---|---|---|
| G.711 | 8 kHz | 300 Hz to 3,400 Hz | 64 kbps | < 1 ms |
| G.722 | 16 kHz | 50 Hz to 7 kHz | 64 kbps | 3 ms |
| G.722.1 | 16 kHz | 50 Hz to 7 kHz | 24, 32 kbps | 40 ms |
| G.722.1 Annex C | 32 kHz | 50 Hz to 14 kHz | 24, 32, 48 kbps | 40 ms |
| AAC-LD | 48 kHz | 20 Hz to 20 kHz | 48 to 64 kbps | 20 ms |
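One way to read these parameters is as a compression ratio against uncompressed audio. The sketch below makes two assumptions that are not part of the comparison itself: the reference is 16-bit mono PCM at each codec's sampling frequency, and each codec runs at the lowest output rate listed for it. The names `CODECS` and `compression_ratio` are hypothetical.

```python
# (sampling frequency in Hz, lowest listed output rate in bit/s)
CODECS = {
    "G.711": (8_000, 64_000),
    "G.722": (16_000, 64_000),
    "G.722.1": (16_000, 24_000),
    "G.722.1 Annex C": (32_000, 24_000),
    "AAC-LD": (48_000, 48_000),
}

REFERENCE_BITS = 16  # assumed bit depth of the uncompressed mono reference


def compression_ratio(sample_rate_hz: int, coded_bps: int,
                      bits: int = REFERENCE_BITS) -> float:
    """Uncompressed PCM bit rate divided by the coded bit rate."""
    return sample_rate_hz * bits / coded_bps


for name, (fs, rate) in CODECS.items():
    print(f"{name}: {compression_ratio(fs, rate):.1f}:1")
```

The ratio says nothing about perceived quality or delay, so it complements the comparison rather than replacing it.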

Three: Advantages and disadvantages of AAC-LD and G.722.1 Annex C compared

| Item | G.722.1 Annex C | AAC-LD |
|---|---|---|
| Sampled audio frequency range | 50 Hz to 14 kHz; close to CD quality, but the high-frequency portion is lost. | Full band, 20 Hz to 20 kHz; the audio is closer to CD quality. |
| Output bit rate | 24, 32, 48 kbps; lower bandwidth than AAC-LD, but at the expense of high frequencies. | 48 to 64 kbps, with support for output above 64 kbps; provides better audio quality. |
| Algorithm complexity | Low complexity; CPU usage is slightly better than AAC-LD. | Modular design and more powerful; supported by dedicated chips, for example from TI. |
| Minimum delay | 20 ms frames, 40 ms algorithmic delay. | 20 ms algorithmic delay, better than G.722.1 Annex C. |
| Multi-channel support | Dual-channel. | Up to 48 tracks plus 15 low-frequency tracks. |
| Standard versatility | Developed by Polycom and requires a Polycom license. Currently used only by Polycom and very few conference TV vendors. | As the core standard of MPEG-4, it is supported by Apple, Nokia, Panasonic and others, has been adopted by many conference TV manufacturers such as Ted, and has broader application prospects. |

At the same sampling frequency, AAC-LD provides better sound quality than G.722.1 Annex C, MP3 and similar codecs. It achieves the shortest delay among ultra-wideband audio codecs while keeping sound quality close to CD, striking the best balance of sound quality, bit rate and delay, which makes it the best choice for conference TV.
